TL;DR

Reason-ModernColBERT (149M params) takes #1 on BrowseComp-Plus on accuracy (87.59%, +7.59 points over the previous best), recall, and calibration, outperforming retrievers 54× its size while using fewer search calls. Model, code, and data are all open.

In May 2025, Reason-ModernColBERT set a new standard on reasoning-intensive retrieval (BRIGHT) by outperforming every retrieval model up to 7B parameters despite being 45× smaller. Last month, LateOn-Code and ColGrep brought late-interaction models to code retrieval, topping the MTEB Code leaderboard and winning 70% of head-to-head comparisons against grep in coding agents, with models as small as 17M parameters.

A pattern is emerging: late interaction keeps delivering state-of-the-art results wherever agents need to search, whether it's reasoning-intensive documents, codebases, or multi-step research. Today, BrowseComp-Plus confirms it on the hardest agentic search benchmark available.

The BrowseComp-Plus benchmark

BrowseComp-Plus is built on OpenAI's BrowseComp, the reference benchmark for Deep Research evaluation. The Plus version introduces a fixed corpus of roughly 100,000 human-verified documents and 830 queries, each designed to take a human over two hours to answer. Same corpus, same queries, same evaluation for everyone, making it possible to isolate how much the retriever contributes to a Deep Research pipeline.

The results

Paired with GPT-5, Reason-ModernColBERT reaches 87.59% accuracy, a 7.59-point jump over the previous best.

Before this result, the best scores on each metric belonged to different models and different methods. Reason-ModernColBERT is the first to lead on all of them at once.

The leaderboard includes models up to 8B parameters, like Qwen3-Embed-8B. Reason-ModernColBERT outperforms all of them at 149M, 54× smaller, while requiring fewer search calls.


A minimal scaffold, a strong signal

BrowseComp-Plus offers two evaluation modes. The "standard" scaffold is a search function that returns the top 5 documents, each truncated to its first 512 tokens. "Custom" scaffolds let teams build their own pipelines, and some go quite far: reinforcement-learned routing models, or oracle-like chunk selection that surfaces only the most relevant passages.

Our custom scaffold is one additional function: get_document(id). The LLM reads ColBERT's retrieval results, decides which documents are worth reading in full, and requests them. No reranking model, no chunk oracle.
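To make the shape of that scaffold concrete, here is a minimal sketch of the two tools an LLM would see. Only the name get_document(id), the top-5 cutoff, and the 512-token truncation come from the description above; the Scaffold and Document classes, the whitespace "tokenizer", and the overlap_score function are hypothetical placeholders, with overlap_score standing in for the real retriever.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable


@dataclass
class Document:
    doc_id: str
    text: str


def truncate(text: str, max_tokens: int = 512) -> str:
    # Assumption: whitespace splitting stands in for a real tokenizer.
    return " ".join(text.split()[:max_tokens])


def overlap_score(query: str, text: str) -> float:
    # Hypothetical placeholder scorer (shared-word count), not ColBERT.
    return float(len(set(query.lower().split()) & set(text.lower().split())))


class Scaffold:
    def __init__(self, corpus: list[Document], score: Callable[[str, str], float]):
        self.corpus = {doc.doc_id: doc for doc in corpus}
        self.score = score

    def search(self, query: str, k: int = 5) -> list[dict]:
        # Standard tool: top-k documents, each cut to its first 512 tokens.
        scored = [(self.score(query, doc.text), doc) for doc in self.corpus.values()]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [
            {"id": doc.doc_id, "score": s, "snippet": truncate(doc.text)}
            for s, doc in scored[:k]
        ]

    def get_document(self, doc_id: str) -> str:
        # The one additional tool: return the full, untruncated text.
        return self.corpus[doc_id].text
```

In an agent loop, the LLM would call search first, inspect the ids, scores, and snippets, and call get_document only for the ids it judges worth a full read.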

This works because ColBERT's late-interaction scoring produces token-level relevance signals. The LLM doesn't need to guess which documents matter: it has fine-grained evidence to base that decision on before committing to a full read. The effect is striking. Accuracy jumps from 79.52% to 87.59%, while search calls drop from 19.31 to 13.27. Better answers, less compute, from a single additional function.
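For readers unfamiliar with late interaction, the sketch below shows the MaxSim operator that ColBERT-style models use: each query token embedding is matched to its most similar document token embedding, and those per-token maxima are summed into the document score. The function names are hypothetical, the vectors in the usage note are made up for illustration, and cosine similarity is assumed as the similarity measure; real models work on learned, normalized embeddings.

```python
import math


def cosine(u: list[float], v: list[float]) -> float:
    # Cosine similarity between two vectors; 0.0 if either has zero norm.
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def maxsim(
    query_vecs: list[list[float]], doc_vecs: list[list[float]]
) -> tuple[float, list[float]]:
    # For each query token, keep its best match among the document tokens.
    per_token = [max(cosine(q, d) for d in doc_vecs) for q in query_vecs]
    # The document score is the sum of those per-token maxima.
    return sum(per_token), per_token
```

Calling maxsim([[1.0, 0.0], [0.0, 1.0]], [[1.0, 0.0], [1.0, 1.0]]) returns a total score together with one value per query token. That per-token breakdown is the kind of fine-grained signal a single-vector embedding score does not expose.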

But the standard scaffold result deserves just as much attention. At 79.52% accuracy and 83.52% recall, it already outperforms every other standard run on the board and matches the best custom ones. No get_document, no engineering tricks, just search.

Same story with open-source LLMs

We ran the same setup with gpt-oss-120b and the pattern holds. Reason-ModernColBERT beats Qwen3-Embed-8B regardless of scaffold configuration, even when the competitor uses a custom pipeline and we use the standard one.

We also tested GTE-ModernColBERT-v1, the general-purpose ColBERT model that serves as Reason-ModernColBERT's base before reasoning-specific fine-tuning. It still outperforms Qwen3-Embed-8B on several configurations. The reasoning fine-tuning sharpens the edge, but the late-interaction architecture does most of the heavy lifting on its own.

Why this changes the cost equation

Every search call in a Deep Research loop costs tokens, latency, and money. A better retriever reduces the number of iterations needed to reach the answer. A smaller retriever reduces the cost of each iteration. Reason-ModernColBERT does both: 149M parameters encoding at a fraction of the cost of an 8B model, and fewer round-trips to the LLM to get a better result.
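As a rough back-of-the-envelope check, the figures reported above can be turned into relative savings. The accuracy and search-call numbers are the GPT-5 results quoted earlier; treating Qwen3-Embed-8B as exactly 8 billion parameters is an assumption based on its nominal size, and the variable names are illustrative.

```python
# Reason-ModernColBERT with GPT-5, as reported above.
standard = {"accuracy": 79.52, "search_calls": 19.31}
custom = {"accuracy": 87.59, "search_calls": 13.27}

# Accuracy gained by adding get_document, in percentage points.
accuracy_gain = custom["accuracy"] - standard["accuracy"]

# Fraction of search calls saved per query.
call_reduction = 1 - custom["search_calls"] / standard["search_calls"]

# Assumption: nominal parameter counts (8B vs 149M).
size_ratio = 8e9 / 149e6

print(f"accuracy gain: {accuracy_gain:.2f} points")      # 8.07 points
print(f"search calls saved: {call_reduction:.1%}")       # 31.3%
print(f"retriever size ratio: {size_ratio:.1f}x")        # 53.7x
```

These ratios say nothing about wall-clock latency or dollar cost on their own; they only quantify the two levers named above, fewer iterations and a smaller encoder.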

Open and reproducible

Model weights, training code, and datasets are all open. Training takes a few hours and a few lines of code.

Models

- Reason-ModernColBERT: reasoning-intensive retrieval (149M)

- LateOn-Code: state-of-the-art code retrieval (17M / 149M)

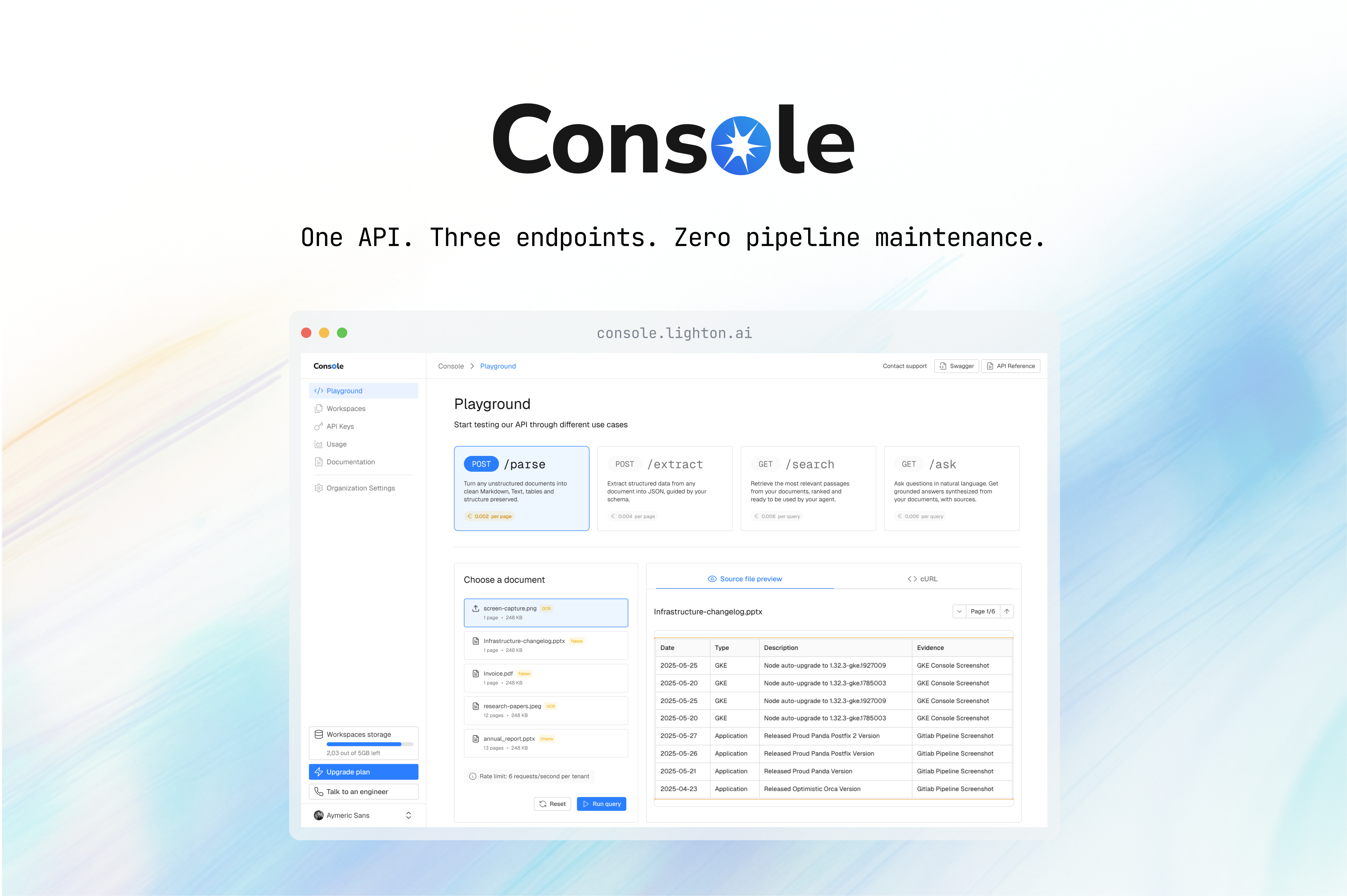

Tools & infrastructure

- PyLate: train and fine-tune late-interaction models

- ColGrep: semantic code search for your terminal and coding agents

- NextPlaid: local-first multi-vector database

Benchmarks & context

Reason-ModernColBERT was developed by Antoine Chaffin, Research Engineer at LightOn.
