TL;DR

EDiTh is an open benchmark for enterprise document retrieval, released today on Hugging Face. It is built around Véracier Industries, a fictional €1.8B French industrial group whose document estate reproduces the multi-entity, multi-language, multi-format complexity of a real multinational.

- Dataset: https://huggingface.co/datasets/lightonai/veracier-industries

- 1,004 unique PDFs · 1.7 GB · 6 languages · 3 formats

- 36 use cases with full answer keys, organized by stakeholder role

Why this benchmark exists

Important Notice

This dataset does not contain real documents. EDiTh is a fully synthetic corpus created for research and demonstration of retrieval capabilities only. Véracier Industries S.A., its subsidiaries (including Précis-Tec S.A.), employees, contracts, certifications, and all associated entities are fictional. Any resemblance to real companies, individuals, or agreements is coincidental. The dataset is intended solely as a benchmark for evaluating enterprise search and RAG systems. It must not be used as a source of factual information, legal reference, or business intelligence.

EDiTh exists to show customers what a modern retrieval pipeline actually delivers: not search results, but decisions grounded in their own documents. Our goal is for executives and management to get answers to their hardest questions, the kind that usually require a team, a week, and a stack of PDFs to resolve.

There's a structural reason this dataset had to exist. The documents that matter most inside a company (contracts, technical specs, regulatory filings, internal memos) are precisely the ones an independent software vendor (ISV) can never see. The sensitivity isn't about the content of any single document: most are individually innocuous. It comes from aggregation and context. A procurement memo, a supplier list, and a maintenance schedule are each unremarkable on their own; together, shared with an outside vendor for a proof of concept, they describe the operational posture of a business. That is why, in practice, almost nothing leaves the firewall. Demonstrating that a retrieval system works on this kind of content has traditionally required being embedded inside the customer, running pilots under NDA, and doing substantial integration work before anyone can judge whether the system is any good. That asymmetry is why public benchmarks have drifted toward academic corpora and generic QA: they're what's available, not what's representative.

EDiTh takes a different route. Rather than wait for access that will never come, we used Claude to generate a corpus of realistic enterprise documents, the kind of material that typically sits behind a firewall, along with the difficult questions an executive or analyst would actually ask of it, and the answers grounded in those documents. The result is a benchmark that looks like work, not like a test set. It lets us demonstrate retrieval quality on content that reflects how companies actually operate, without needing an NDA to do it.

Retrieval systems are typically benchmarked against academic metrics that measure ranking quality in the abstract. Those numbers rarely capture what matters inside a company: whether someone in finance, operations, or the executive team can move from a question to a defensible answer without leaving the document corpus. EDiTh closes that gap, reframing retrieval as what it should be: infrastructure for document-to-decision reasoning, not another leaderboard entry.

The deeper point is that the same logic that forced EDiTh to be synthetic is the logic that shapes how Paradigm is deployed. If a handful of mundane documents become sensitive the moment they aggregate, then the system reasoning over the full corpus can't sit outside the perimeter either. Document-to-decision infrastructure has to run where the documents already live, inside the customer's environment, under the customer's control. EDiTh lets us prove the capability in public. Paradigm is how that capability shows up in production, without anything ever having to leave.

What you get

Today's release consists of three components.

The document corpus. 1,004 PDFs spanning seven subsidiaries across five countries, with a further 320 documents inherited through the in-progress Précis-Tec acquisition. The corpus mirrors the composition observed in enterprise document stores: a majority of searchable PDFs, a significant minority of scanned files including handwritten annotations and visual degradation, and a mixed subset combining both. Six languages are represented, with substantial French, English, and German content and a smaller volume of bilingual, Italian, and Spanish material.
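A first sanity check after downloading is simply to count what arrived. The sketch below is a minimal illustration, not an official loader: it assumes the `huggingface_hub` package is installed, and grouping by top-level folder is a guess, since the repository layout is not described here. The helper names `download_corpus` and `inventory_pdfs` are hypothetical.

```python
from collections import Counter
from pathlib import Path


def download_corpus() -> Path:
    """Fetch a local snapshot of the dataset repo (about 1.7 GB).

    Assumes `pip install huggingface_hub`; imported lazily so the
    inventory helper below works without it.
    """
    from huggingface_hub import snapshot_download

    local_dir = snapshot_download(
        repo_id="lightonai/veracier-industries",
        repo_type="dataset",
    )
    return Path(local_dir)


def inventory_pdfs(root: Path) -> Counter:
    """Count PDF files under `root`, keyed by top-level folder.

    Assumption: the folder layout is unknown, so files sitting
    directly under `root` are grouped under ".".
    """
    counts: Counter = Counter()
    for path in root.rglob("*"):
        if not path.is_file() or path.suffix.lower() != ".pdf":
            continue
        parts = path.relative_to(root).parts
        counts[parts[0] if len(parts) > 1 else "."] += 1
    return counts
```

Calling `sum(inventory_pdfs(download_corpus()).values())` should then give a total to compare against the published document count.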

The use cases. 36 scenarios covering strategic, legal, regulatory, quality, defense, supply chain, and HR workflows. Each use case specifies a persona, a question, the expected retrieval set, the ground-truth answer, and the failure modes under evaluation. Use cases are designed to exercise capabilities that generic benchmarks do not measure: cross-entity reasoning, temporal reasoning, terminology variance across jurisdictions, and resilience to scanned and degraded inputs.
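To make the shape of a use case concrete, here is a minimal sketch of how one could be represented and how a system's retrieved documents could be compared against the expected retrieval set. The field names and the precision/recall scoring are assumptions made for illustration; the release may use a different schema and a different evaluation method.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class UseCase:
    """One benchmark scenario (hypothetical field names)."""

    persona: str
    question: str
    expected_documents: frozenset[str]
    ground_truth_answer: str
    failure_modes: tuple[str, ...] = ()


def retrieval_scores(case: UseCase, retrieved: list[str]) -> dict[str, float]:
    """Set-based recall and precision against the expected retrieval set."""
    retrieved_set = set(retrieved)  # duplicates count once
    hits = retrieved_set & case.expected_documents
    recall = (
        len(hits) / len(case.expected_documents)
        if case.expected_documents
        else 1.0
    )
    precision = len(hits) / len(retrieved_set) if retrieved_set else 0.0
    return {"recall": recall, "precision": precision}
```

Set-based scoring ignores ranking on purpose: for a document-to-decision workflow, what matters first is whether every required document was found at all.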

Dataset at a glance

The scanned subset includes handwritten annotations, stamp marks, multi-column layouts, and visual degradation consistent with documents that have been photocopied across multiple generations. Each scanned document was rendered and, where applicable, physically printed and rescanned to produce realistic artifacts.

The bilingual subset matters more than its percentage suggests. These are genuinely bilingual documents: French supplier contracts with English governing-law clauses, German procedural documents with English technical appendices. Every European multinational has documents like these, and no current public RAG benchmark represents them.

How it's organized

The dataset is structured by stakeholder role. Each use case maps to a defined persona inside the Véracier universe: the executive who would own the question, the document set they would typically consult, and the failure modes specific to their context.

Nine roles are represented: CEO, CFO, CTO, General Counsel, CISO, CHRO, CPO, Quality Director, and Compliance Officer. Each role carries a different retrieval profile. The CFO use cases require temporal scope reasoning and threshold filtering. The CISO use cases require security classification extraction across IT asset inventories. The General Counsel use cases require cross-language clause detection, including exclusions that look like coverage until read carefully.
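As an illustration of what "temporal scope reasoning and threshold filtering" means in practice, the sketch below selects records that are both active during a reporting period and above an amount threshold. The `Commitment` record and its fields are invented for this example and do not come from the dataset.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Commitment:
    """A financial commitment extracted from a document (hypothetical)."""

    document_id: str
    amount_eur: float
    start: date
    end: date


def in_scope(
    items: list[Commitment],
    period_start: date,
    period_end: date,
    min_amount_eur: float,
) -> list[Commitment]:
    """Keep items that overlap the period and meet the threshold."""
    return [
        item
        for item in items
        # Two date ranges overlap when each starts before the other ends.
        if item.start <= period_end
        and item.end >= period_start
        and item.amount_eur >= min_amount_eur
    ]
```

A system that applies only one of the two conditions returns a plausible-looking but wrong set, which is the kind of failure these use cases are meant to surface.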

The baseline run

The reference results were produced using a single configuration: LightOn API as the retrieval and orchestration layer, with Claude Opus 4.6 as the reasoning model. Each use case was processed with a single API call. No external orchestration, fine-tuning, or human-in-the-loop intervention was involved.

Headline

Breakdown by subsidiary

The 36 Use Cases

Thirty-six use cases. Each one is a question an executive actually brings to a meeting. The kind that usually requires a team, a week, and a stack of PDFs to resolve.

The corpus doesn't cooperate: documents in six languages, scanned files with handwritten amendments, contracts with exclusion clauses that read like coverage until you parse them carefully. Each use case specifies the persona, the question, the expected retrieval set, and the failure modes under evaluation.

A sample:

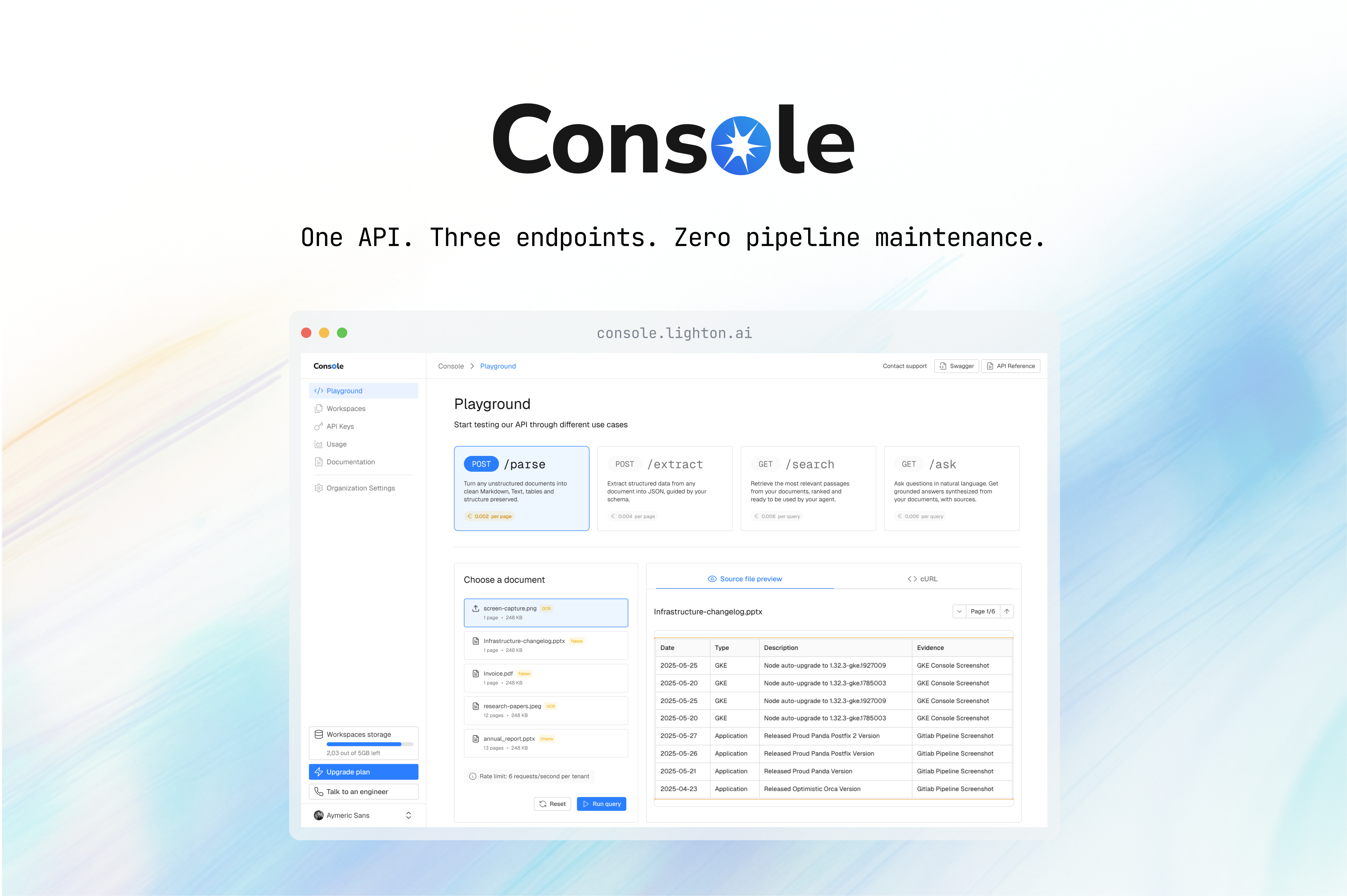

How LightOn API solved the use cases

Each of the 36 use cases was resolved end-to-end by a single LightOn API call. The API handles the full pipeline natively: multi-language retrieval across the corpus, reranking, reading of the relevant document chunks, and synthesis of a structured answer with citations, page references, and confidence scores. No external orchestration, chaining framework, or retrieval code surrounds the call. The integration is a single HTTP request in and a structured response out.

This design is what makes the sub-minute average per use case achievable. The retrieval, reasoning, and citation logic are co-located inside the API, which eliminates the round-trip overhead of separating them across services, and allows the agent to iterate searches and re-read chunks without network latency accumulating between stages. The same API is the interface through which LightOn customers run their own document-to-decision workflows in production.

Links

- Dataset: https://huggingface.co/datasets/lightonai/veracier-industries

- LightOn API: lighton.ai/api

- March announcement: lighton.ai/blog/edith

EDiTh is a project led by Adèle Guignochau and Igor Carron.
