TL;DR

The Finance Commons AMF OCR dataset (FC-AMF-OCR) is a large collection of financial documents with OCR annotations. It contains over 9.3 million images, providing researchers and AI developers with data to build better document processing models. This dataset fills a gap between academic research and real-world needs. It offers a wide range of financial documents, from reports to regulatory filings. FC-AMF-OCR aims to help improve AI systems for analyzing financial documents.

Dataset Summary

The FC-AMF-OCR dataset is a comprehensive document collection derived from the AMF-PDF dataset, which is part of the Finance Commons collection. It comprises 9.3 million images, each processed through Optical Character Recognition (OCR) using the docTR library. Native text annotations are available in the AMF-Text dataset, but they suffer from imperfections such as missing spaces, extra spaces, and artifacts. In addition, they are presented as a single continuous block of text without page demarcations, which limits their utility for image-to-text tasks.

The FC-AMF-OCR dataset aims to address these limitations by providing:

- Full bounding box information for each element

- Confidence scores for individual words, lines, and text blocks

- Per-page annotations instead of a single block of text per document

- Corrected spacing, resolving the missing and extra spaces found in the native text annotations
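As a minimal sketch of how per-word confidence scores and bounding boxes might be used, the snippet below drops low-confidence words from one page. It assumes the annotations follow the nested layout that docTR's document export produces (pages, then blocks, lines, and words, each word carrying `value`, `confidence`, and `geometry`); the schema of the released files should be checked before relying on it. The function name, the sample page, and the 0.5 threshold are made up for illustration.

```python
from typing import Any, Dict, List, Tuple

Word = Tuple[str, float, Any]


def confident_words(page: Dict[str, Any], min_conf: float = 0.5) -> List[Word]:
    """Return (text, confidence, geometry) for every word at or above min_conf."""
    kept: List[Word] = []
    for block in page.get("blocks", []):
        for line in block.get("lines", []):
            for word in line.get("words", []):
                if word["confidence"] >= min_conf:
                    kept.append((word["value"], word["confidence"], word["geometry"]))
    return kept


# Toy page in the assumed layout (not real data). Geometry is
# [[xmin, ymin], [xmax, ymax]] in coordinates relative to the page size.
sample_page = {
    "page_idx": 0,
    "blocks": [
        {
            "lines": [
                {
                    "words": [
                        {"value": "Rapport", "confidence": 0.98,
                         "geometry": [[0.10, 0.10], [0.25, 0.13]]},
                        {"value": "annuel", "confidence": 0.91,
                         "geometry": [[0.26, 0.10], [0.38, 0.13]]},
                        {"value": "~#", "confidence": 0.12,
                         "geometry": [[0.80, 0.90], [0.82, 0.92]]},
                    ]
                }
            ]
        }
    ],
}

for text, conf, box in confident_words(sample_page):
    print(f"{text!r} (confidence {conf:.2f}) at {box}")
```

Because the scores are stored rather than applied, the threshold stays a downstream choice: a stricter value trades recall for cleaner text.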

Most existing large-scale OCR datasets, such as the Industry Documents Library (IDL) or the PDF Association dataset (PDFA), suffer from a number of issues:

- Time Coverage: These datasets consist primarily of older documents or PDFs from specific periods, which might not reflect current trends or developments.

- OCR Engines: They use outdated or inconsistent OCR technologies, affecting the accuracy and reliability of text extraction.

- Embedded text: some of these annotations are limited to text that is readily extractable from the file, so text drawn in images and present only as bitmap renditions is missed entirely.

FC-AMF-OCR enhances existing datasets by offering detailed OCR annotations for a recent collection of text-rich documents from the French Authority for Financial Markets (AMF). It leverages the excellent open-source docTR OCR engine to extract text from various elements, including images and logos. By utilizing an open-source solution, FC-AMF-OCR ensures stability against API changes and allows users to implement custom filtering as needed. This approach provides researchers and developers with a reliable and transparent tool for comprehensive document understanding and analysis.

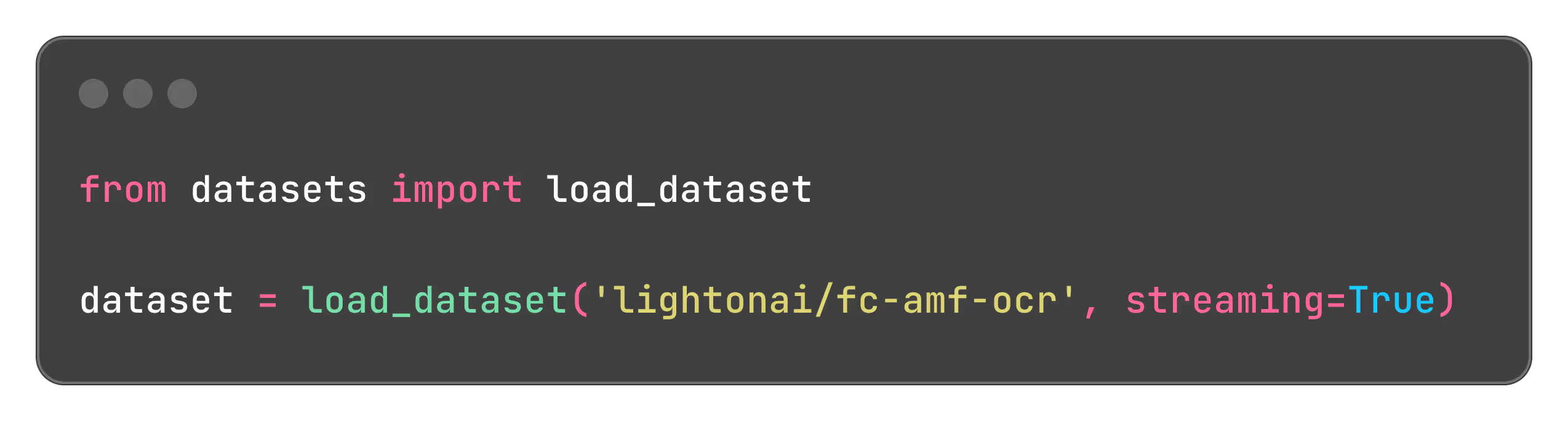

Like most large-scale OCR datasets, such as IDL, this dataset is distributed in the webdataset .tar format and can be read directly with the webdataset library. Concretely, each document is stored as a pair of files: a PDF and a json.gz file containing the OCR annotation.
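To make the pairing concrete, here is a minimal sketch that reads such pairs from a .tar shard using only the Python standard library. It assumes the usual WebDataset naming convention, where files sharing the part of the basename before the first dot belong to the same sample. The helper names, the file names, and the placeholder contents are hypothetical; the demo shard is built in memory and contains no real data.

```python
import gzip
import io
import json
import tarfile
from typing import Dict, Iterator, Tuple


def split_key(name: str) -> Tuple[str, str]:
    """Split 'dir/doc1.json.gz' into ('dir/doc1', 'json.gz')."""
    directory, _, base = name.rpartition("/")
    stem, _, ext = base.partition(".")
    return (f"{directory}/{stem}" if directory else stem), ext


def iter_documents(shard: bytes) -> Iterator[Tuple[str, bytes, dict]]:
    """Yield (key, pdf_bytes, annotation) for each pdf/json.gz pair in a shard."""
    pending: Dict[str, Dict[str, bytes]] = {}
    with tarfile.open(fileobj=io.BytesIO(shard), mode="r") as tar:
        for member in tar:
            if not member.isfile():
                continue
            key, ext = split_key(member.name)
            parts = pending.setdefault(key, {})
            parts[ext] = tar.extractfile(member).read()
            # Emit the sample once both halves of the pair have been seen.
            if "pdf" in parts and "json.gz" in parts:
                del pending[key]
                annotation = json.loads(gzip.decompress(parts["json.gz"]))
                yield key, parts["pdf"], annotation


def make_demo_shard() -> bytes:
    """Build a tiny in-memory shard with placeholder content (not real data)."""
    files = {
        "doc1.pdf": b"%PDF-1.4 placeholder",
        "doc1.json.gz": gzip.compress(json.dumps({"pages": []}).encode("utf-8")),
    }
    buffer = io.BytesIO()
    with tarfile.open(fileobj=buffer, mode="w") as tar:
        for name, payload in files.items():
            info = tarfile.TarInfo(name=name)
            info.size = len(payload)
            tar.addfile(info, io.BytesIO(payload))
    return buffer.getvalue()


for key, pdf_bytes, annotation in iter_documents(make_demo_shard()):
    print(key, len(pdf_bytes), list(annotation))
```

In practice the webdataset library performs this grouping itself and adds streaming and shuffling on top, so a hand-written reader like this is mainly useful for inspecting a shard.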

Approach

We start from the original dataset, a collection of 633,244 PDF files, and apply a few simple filters to remove files that are not relevant for training. The main goal is a dataset that is ready to use for large-scale training. We use the following filters:

- Corrupted files: we remove files that fail to be decoded correctly or that take too long to load.

- Page count: we remove files that have more than 500 pages. Large files take too long to load and render.

- Keep original quality: we apply no compression or rendering that would degrade the quality of the original PDF.
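The two removal rules above can be sketched as a single predicate. This is an illustration under stated assumptions, not the pipeline that was actually run: `keep_document`, the `count_pages` callback, and the 30-second limit are hypothetical (the text gives the 500-page limit but no time limit), and the fake loader stands in for a real PDF library.

```python
import time
from typing import Callable

MAX_PAGES = 500          # from the text: files with more than 500 pages are removed
MAX_LOAD_SECONDS = 30.0  # hypothetical value; the text does not state a limit


def keep_document(
    path: str,
    count_pages: Callable[[str], int],
    max_pages: int = MAX_PAGES,
    max_seconds: float = MAX_LOAD_SECONDS,
) -> bool:
    """Return True if the file decodes, loads fast enough, and is not too long."""
    start = time.perf_counter()
    try:
        pages = count_pages(path)
    except Exception:
        return False  # treated as corrupted: the file failed to decode
    elapsed = time.perf_counter() - start
    # Note: this measures load time after the fact; it does not interrupt a
    # slow load. A real pipeline would likely enforce a hard timeout instead.
    return elapsed <= max_seconds and pages <= max_pages


def fake_count_pages(path: str) -> int:
    """Stand-in for a PDF loader: known files return a page count, others fail."""
    pages = {"short.pdf": 2, "huge.pdf": 812}
    if path not in pages:
        raise ValueError(f"cannot decode {path}")
    return pages[path]


candidates = ["short.pdf", "huge.pdf", "broken.pdf"]
kept = [p for p in candidates if keep_document(p, fake_count_pages)]
print(kept)
```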

The basic filtering removes less than 1% of the original dataset. After the basic filtering:

- We selected the best-performing models from the docTR library. For maximum accuracy, we keep all models in full precision (FP32):

  - Detection model: DBNet with a ResNet-50 backbone

  - Recognition model: CRNN with a VGG-16 backbone

- We use data parallelism to distribute the OCR process over multiple GPUs: the dataset is split into multiple shards, and each shard is processed in parallel.

- The recognition model is compiled with torch.compile to speed up the inference.
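The sharding step can be sketched as follows. The text only says that the dataset is split into shards processed in parallel; the round-robin assignment, the function name, and the file names below are assumptions chosen for illustration.

```python
from typing import List, Sequence


def shard_for_rank(items: Sequence[str], rank: int, world_size: int) -> List[str]:
    """Return the items assigned to one worker under round-robin sharding."""
    if not 0 <= rank < world_size:
        raise ValueError(f"rank must be in [0, {world_size}), got {rank}")
    # Worker `rank` takes items rank, rank + world_size, rank + 2 * world_size, ...
    return list(items[rank::world_size])


# Hypothetical file list and worker count (for example, one worker per GPU).
files = [f"doc_{i:03d}.pdf" for i in range(10)]
world_size = 4
shards = [shard_for_rank(files, rank, world_size) for rank in range(world_size)]

for rank, shard in enumerate(shards):
    print(rank, shard)
```

Round-robin keeps shard sizes within one item of each other, but it does not balance by page count, so a worker that receives several very long documents would finish later than the others.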

By default, the images are rendered at a DPI of 144 for all processing steps, but we provide the original PDFs so users can render them at their preferred quality. Having access to the full PDF quality is very important for training robust models.
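To see what a given DPI means in pixels, recall that PDF page sizes are expressed in points, with 72 points per inch, so rendering at 144 DPI doubles each dimension. The sketch below works this out for an A4 page of roughly 595 x 842 points; the function name is hypothetical and the page size is only an example.

```python
POINTS_PER_INCH = 72  # PDF user-space unit: 1 point = 1/72 inch


def rendered_size(width_pt: float, height_pt: float, dpi: int = 144) -> tuple:
    """Return the (width, height) in pixels of a page rendered at the given DPI."""
    scale = dpi / POINTS_PER_INCH
    return round(width_pt * scale), round(height_pt * scale)


# A4 is approximately 595 x 842 points.
print(rendered_size(595, 842))           # default 144 DPI, scale factor 2.0
print(rendered_size(595, 842, dpi=300))  # a higher-quality rendering
```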

The dataset's page distribution is represented in the following histogram. On average, documents contain approximately 15 pages, while the median page count is about 2.
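A mean far above the median indicates a right-skewed distribution: most documents are short, and a smaller number of long ones pull the average up. As a rough consistency check, the average can be recovered from the figures quoted earlier, assuming that each image corresponds to one page, that 9.3 million is a rounded total, and that filtering removed at most 1% of the 633,244 original files.

```python
total_images = 9_300_000     # rounded figure from the text
original_pdfs = 633_244      # size of the original collection
max_removed_fraction = 0.01  # "less than 1%" removed by basic filtering

# The number of documents kept lies between these two bounds.
min_docs = original_pdfs * (1 - max_removed_fraction)
max_docs = original_pdfs

low = total_images / max_docs
high = total_images / min_docs
print(f"{low:.1f} to {high:.1f} pages per document on average")
```

Both bounds are just under 15, which agrees with the stated average of approximately 15 pages.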

We also show the year distribution of the dataset. It contains documents from 2008 to 2024, so it is relatively recent and spans many years, which complements previous datasets.

Compute

The compute was carried out on an HPE Cray node with 8xH100, hosted on Orange Business Cloud Avenue.

Access the dataset on HuggingFace here.

To reference this publication in your work, please use the following BibTeX entry:

@misc{FC-AMF-OCR,
  title={FC-AMF-OCR Dataset : LightOn releases a 9.3 million images OCR dataset to improve real world document parsing},
  author={Taghadouini, Said},
  organization={LightOn},
  url={https://www.lighton.ai/lighton-blogs/fc-amf-ocr-dataset},
  year={2024}
}
